Presented By: Michigan Robotics

Learning Structured Reward Representations for Reinforcement Learning

PhD Defense, Mohamad Louai Shehab

Committee Chair: Necmiye Ozay

Abstract:

Learning from demonstrations is one of the most natural and powerful paradigms for acquiring intelligent behavior. Among its most prominent formulations is inverse reinforcement learning (IRL), which has achieved remarkable success in domains such as robotics and autonomous driving. Yet despite this promise, IRL remains fundamentally challenged by ill-posedness: the same observed behavior can often be explained by many different underlying objectives. If an agent moves from point A to point B, is it because it is attracted to B, repelled by A, or optimizing something more subtle entirely? From behavior alone, the answer is generally ambiguous.

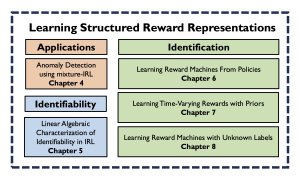

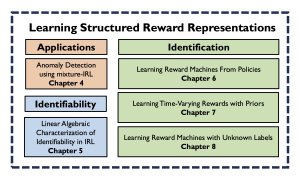

This dissertation addresses several foundational questions at the heart of inverse reinforcement learning. First, it investigates which environments and dynamics are inherently suitable for learning from demonstrations. Second, it studies how "rationality priors" can be incorporated to regularize IRL, reducing its ambiguity and improving identifiability. Finally, it develops methods for inferring latent memory structure directly from demonstrations, enabling classical IRL techniques to extend beyond Markovian assumptions to the richer setting of multi-stage tasks with history-dependent, non-Markovian rewards.

Robotics Atrium & on Zoom (Passcode: 19982001)
