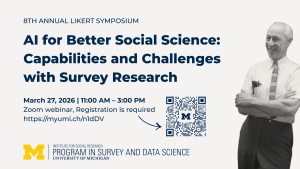

Presented By: Institute for Social Research

AI for Better Social Science: Capabilities and Challenges with Survey Research

The 8th Annual Likert Symposium

Register to receive Zoom link at https://myumi.ch/n1dDV

Program:

AI-Assisted Conversational Interviewing: Effects on Data Quality and Respondent Experience

Soubhik Barari, Senior Research Methodologist at NORC at the University of Chicago, working on applied problems at the intersection of survey methodology, data science, and AI.

Abstract: Standardized surveys scale efficiently but often lack depth, while conversational interviews improve response quality but are costly and inconsistent. This study advances a framework for AI‑assisted conversational interviewing to bridge this divide and demonstrates evidence of its practical utility. In a web survey experiment with 1,800 participants, we deployed AI “chatbots” powered by large language models to probe respondents for elaboration and to code open‑ended answers in real time. The chatbots achieved reasonably strong accuracy in live coding, though they exhibited some over-identification of themes, partly reflecting respondents’ tendency to agree with AI suggestions. They elicited richer, more detailed responses compared to standard surveys, with only small trade‑offs in respondent experience. Overall, our results demonstrate that AI‑supported conversational tools can meaningfully enhance the depth and quality of open‑ended data collection, pointing to a new generation of scalable, adaptive survey methods.

LLMs as Synthetic Respondents: A Tool for Augmenting Human Surveys

Serina Chang, Assistant Professor at UC Berkeley, jointly appointed in EECS and Computational Precision Health and part of the Berkeley AI Research (BAIR) Lab.

Abstract: Surveys are an invaluable resource for understanding human opinions and behaviors, but they require substantial time and effort. Large language models (LLMs) present new opportunities to predict responses to survey questions, given their natural language abilities and social knowledge acquired during pretraining. Integrating LLMs as synthetic respondents into survey pipelines could improve efficiency across multiple stages—from pre-survey pilot testing and sampling design to post-survey data imputation and analysis—not as a replacement for human participants but as a way to augment existing workflows. However, these opportunities also raise new challenges, including how to accurately reflect the opinions of diverse subpopulations, generalize to questions outside LLM training data, and reduce computational costs. In this talk, I describe how we address these challenges in our work. First, we show that fine-tuning LLMs on survey data substantially improves their ability to predict public opinion, generalizing to unseen surveys and subgroups. In complementary work, rather than fine-tuning with additional data, we leverage knowledge already encoded in the LLM, showing with probes and sparse autoencoders that models contain far more internal knowledge of human opinions than their outputs reveal. Finally, we present graph-based approaches as a lightweight alternative: a graph model equipped with language representations from LLMs—but requiring no further LLM training or inference—can match LLM performance on some survey tasks while using orders of magnitude less compute.

Validation and Inference for Survey Research Using Silicon Sampling

Lisa Argyle, Associate Professor of Political Science at Purdue University.

Abstract: In both research and commercial applications, AI is increasingly being used to create synthetic representations of humans. This raises at least two important questions for scientists as they consider the integration of this new tool: How can a researcher know if the silicon survey output is valid? And how can a researcher make new inferences on the basis of synthetic output? Drawing on a synthesis of research on silicon sampling, I discuss the intellectual foundations of this approach, the ongoing practical and theoretical challenges, and cutting-edge methodological tools. I conclude by proposing some best practices for conducting transparent open science using large language models.

Validating LLM simulations as behavioral evidence

Jessica Hullman, Ginni Rometty Professor of Computer Science and Faculty Fellow at the Institute for Policy Research at Northwestern University.

Abstract: A growing literature positions AI, and especially large language models (LLMs), as a transformative technology for simulating human behavior in surveys and experiments. This promise sparks a debate over how best to leverage this new data source. While a growing number of empirical studies report that LLM-simulated responses can approximate patterns found in real survey and experimental data, there is little consensus on what it means to show that AI surrogates are valid for the purpose of studying human behavior. I’ll contrast heuristic approaches to validation in the literature with statistical calibration approaches that account for biases learned on items for which human ground truth labels are known. While calibration approaches provide guarantees of the type that social scientists are accustomed to expecting from statistical methods, they are not a panacea. I’ll describe limitations based on the nature of behavioral data and reflect on meta-scientific questions that arise about the epistemic status of silicon samples as behavioral data.

Discussant: Ambuj Tewari, Professor, Department of Statistics, University of Michigan, Ann Arbor
